Over the past few years, after having spoken with a lot of individuals & teams at conferences & organizations, I have realized that the understanding of Agile, and of what it means to do effective Testing on Agile projects / teams, is very poor.

So, we at ThoughtWorks, Pune, are starting 2016 with vodQA Pune - Agile Testing Workshop, with the objective of connecting with our peers in the Software industry to discuss and understand - "What is Agile, and what does it mean to Test on Agile projects / teams?"

We have planned and organized this vodQA conference in a very Lean and simple way - announcement, registrations, agenda and updates - all directly from our vodQA group on Facebook.

This edition of vodQA will be held on Saturday, 9th January, 2016 at the ThoughtWorks, Pune office on the 4th floor. For more details, see the vodQA event page on Facebook.

Tuesday, January 5, 2016

Thursday, May 28, 2015

vodQA Pune - Innovations in Testing

vodQA Update - Agenda + Slides + Videos

Here is an update of the vodQA that went by at supersonic speed!

We had an intense and action-packed vodQA in ThoughtWorks, Pune on Saturday, 6th June 2015 - with the theme - Innovations in Testing!

Here are some highlights from the event:

- You can find the details of the agenda + links to slides & videos from here or here.

- After record-breaking attendee registrations (~500), we frantically closed off registrations. Based on historic attendance trends, this meant around 140-180 people would show up. 135 attendees made it to vodQA - with the first person reaching the office at 8.30am, when the event was supposed to start at 10am! That is enthusiasm!

- We had 45+ speaker submissions (and we had to reject more submissions because the registrations had already closed). After speaking to all submitters, and a lot of dry-runs and feedback, we eventually selected 6 talks, 4 lightning talks, 4 workshops from this massive list.

- We were unfortunately able to select only 2 external speakers (but it was purely based on the content + relevance to the theme). One of these speakers travelled all the way from Ahmedabad to Pune on his own for delivering a Lightning Talk.

- We had a few ThoughtWorkers travelling from Bangalore (2 speakers + 1 attendee) and 1 (speaker) from Gurgaon

- We had around 30-40 ThoughtWorkers participating in the conference.

- No event in the office can be possible without the amazing support from our Admin team + support staff!

- Overall - we had around 200 people in the office on a Saturday!

- For the first time, we did a live broadcast of all the talks + lightning talks (NO workshops). This allowed people to connect with vodQA as it happened. Also, uploading videos - usually the last and most cumbersome post-event task - was now the first thing to be completed. See the videos here on youtube. This update got delayed because we still have to get the link to the slides :(

- We celebrated the 5th Birthday of vodQA!

- Even though most projects in TW Pune are running at 120+% delivery speed, we were able to pull this off amazingly well! This can only happen when individuals believe in what they are contributing towards. Thank you all!

- We wrapped up most of the post-event activities (office-cleanup, retro, post-vodQA dinner and now this update email) within 5 days of the vodQA day - another record by itself!

- Some pictures are attached with this email.

You can see the tweets and comments in the vodQA group on facebook.

Again, A HUGE THANKS to ALL those who participated in any way!

On behalf of the vodQA team + all the volunteers!

-----------------------------------------------------------------------------------------------------------------------------------------

[UPDATE]

The detailed agenda, with expected learning and speaker information, is available here (http://vodqa-pune.weebly.com/agenda.html) for vodQA Pune - Innovations in Testing.

NOTE:

- Each workshop has limited # of seats.

- Registration for workshop will be done at the Attendee Registration Desk between 9am-10am on vodQA day.

- Registration will be on a first-come, first-served basis.

- See each talk / workshop details (below) for pre-requisites, if any.

----------------

vodQA is back in ThoughtWorks, Pune on Saturday, 6th June 2015. This time the theme is - "Innovations in Testing".

We got a record number of submissions from wannabe speakers and HUGE number of attendee registrations. Selecting 12-14 talks from this list was no small task - but we had to take a lot of tough decisions.

The agenda is now published (see here - http://vodqa-pune.weebly.com/agenda.html) and we are looking forward to having a very rocking vodQA!

Labels: #vodqa, automation, bigdata, collaboration, conference, experiences, innovation, meetup, mobile_testing, opensource, patterns, performance, pune, test_pyramid, testing, testing_conference, thoughtworks, vodQA, workshop

Monday, May 11, 2015

vodQA Geek Night in ThoughtWorks, Hyderabad - Client-side Performance Testing Workshop

I am conducting a workshop on "Client-side Performance Testing" in vodQA Geek Night, ThoughtWorks, Hyderabad from 6.30pm-8pm IST on Thursday, 14th May, 2015.

Visit this page to register!

Abstract of the workshop:

In this workshop, we will see the different dimensions of Performance Testing and Performance Engineering, and focus on Client-side Performance Testing.

Before we get to doing some Client-side Performance Testing activities, we will first understand how to look at client-side performance, and put that in the context of the product under test. We will see, using a case study, the impact of caching on performance - the good & the bad! We will then experiment with some tools like WebPageTest and Page Speed to understand how to measure client-side performance.

Lastly - just understanding the performance of the product is not sufficient. We will look at how to automate the testing for this activity - using WebPageTest (private instance setup), and experiment with yslow - as a low-cost, programmatic alternative to WebPageTest.

Venue:

ThoughtWorks Technologies (India) Pvt Ltd.

3rd Floor, Apurupa Silpi,

Beside H.P. Petrol Bunk (KFC Building),

Gachibowli,

Hyderabad - 500032, India

Labels: #vodqa, automation, automation_framework, conference, experiences, hyderabad, innovation, learning, meetup, opensource, performance, pune, ruby, testing, testing_conference, thoughtworks, vodQA, vodQAHyd, workshop

Thursday, March 19, 2015

Enabling CD & BDT in March 2015

I have been very busy of late ... and am enjoying it too! I am learning and doing a lot of interesting things in the Performance Testing / Engineering domain. I had no idea there are so many types of caching, and that there would be a need to do so many different types of Monitoring for availability, client-side performance testing, Real User Monitoring, Server-side load testing and more ... it is a lot of fun being part of this aspect of Testing.

That said, I am equally excited about 2 talks coming up in the end-of-March 2015:

Enabling CD (Continuous Delivery) in Enterprises with Testing

- at Agile India 2015, on Friday, 27th March 2015 in Bangalore.

Abstract

The key objective of organizations is to provide / derive value from the products / services they offer. To achieve this, they need to be able to deliver their offerings in the quickest time possible, and of good quality!

In such a fast moving environment, CI (Continuous Integration) and CD (Continuous Delivery) are now a necessity and not a luxury!

There are various practices that Organizations and Enterprises need to implement to enable CD. Testing (automation) is one of the important practices that needs to be setup correctly for CD to be successful.

Testing in Organizations on the CD journey is tricky and requires a lot of discipline, rigor and hard work. In Enterprises, the Testing complexity and challenges increase exponentially.

In this session, I am sharing my vision of the Test Strategy required to make successful the journey of an Enterprise on the path of implementing CD.

Build the 'right' regression suite using Behavior Driven Testing (BDT) - a Workshop

- at vodQA Gurgaon, on Saturday, 28th March 2015 at ThoughtWorks, Gurgaon.

Abstract

Behavior Driven Testing (BDT) is a way of thinking. It helps in identifying the 'correct' scenarios, in form of user journeys, to build a good and effective (manual & automation) regression suite that validates the Business Goals. We will learn about BDT, do some hands-on exercises in form of workshops to understand the concept better, and also touch upon some potential tools that can be used.

Learning outcomes

- Understand Behavior Driven Testing (BDT)

- Learn how to build a good and valuable regression suite for the product under test

- Learn different styles of identifying / writing your scenarios that will validate the expected Business Functionality

- Automating tests identified using BDT approach will automate your Business Functionality

- Advantages of identifying Regression tests using BDT approach

Labels: agile, agileindia2015, automation, bangalore, bdt, collaboration, conference, experiences, gurgaon, meetup, performance, pune, test_pyramid, testing, testing_conference, thoughtworks, vodQA, workshop

Thursday, February 19, 2015

Experiences from webinar on "Build the 'right' regression suite using Behavior Driven Testing (BDT)"

I did a webinar on how to "Build the 'right' regression suite using Behavior Driven Testing (BDT)" for uTest Community Testers on 18th Feb 2015 (2pm ET).

The recording of the webinar is available here on utest site (http://university.utest.com/recorded-webinar-build-the-right-regression-suite-using-behavior-driven-testing-bdt/).

The slides I used in the webinar can be seen below, and are also available on slideshare.

Here are some of my experiences from the webinar:

- It was very difficult to do this webinar - from a timing perspective. It was scheduled from 2-3pm ET (which meant it was 12.30-1.30am IST). I could feel the fatigue in my voice when I heard the recording. I just hope the attendees did not catch that, and that it did not affect the effective delivery of the content.

- There were over 50 attendees in the webinar. Though I finished my content in about 38-40 minutes, the remaining 20 minutes were not sufficient to go through the questions. The questions themselves were very good, and thought provoking for me.

- A webinar is a great way to create content and deliver it without a break - as a study material / course content. The challenge and the pressure is on the speaker to ensure that the flow is proper, and the session is well planned and structured. Here, there are no opportunities to tweak the content on the fly based on attendee comments / questions / body language.

- That said, I always find it much more challenging to do a webinar compared to a talk. Reason - in a talk, I can see the audience. This is a HUGE advantage. I can understand from their facial expressions, body language if what I am saying makes sense or not. I can have many interactions with them to make them more involved in the content - and make the session about them, instead of me just talking. I can spend more time on certain content, while skipping over some - depending on their comfort levels.

Labels: agile, automation, bdt, collaboration, conference, domain, experiences, innovation, patterns, pune, punedashboard, pyramid, test_pyramid, testing, testing_conference, thoughtworks, utest, webinar

Thursday, January 15, 2015

Reading does help bring about fearless change ...

[Update] The link to the article was wrong :( Corrected it now

I have shared my experience on How I Turned My Idea Into A Product on ThoughtWorks Insights. This proves (to me) that reading does help.

Labels: automation, change, collaboration, feedback, influence, innovation, learning, lindarising, opensource, patterns, pune, punedashboard, pyramid, testing, thoughtworks, tta, visualization

Saturday, November 22, 2014

To Deploy or Not to Deploy - decide using Test Trend Analyzer (TTA) in AgilePune 2014

I spoke on the topic - "To Deploy or Not to Deploy - decide using Test Trend Analyzer (TTA)" in Agile Pune, 2014.

The slides from the talk are available here, and the video is available here.

Below is some information about the content.

The key objective of organizations is to provide / derive value from the products / services they offer. To achieve this, they need to be able to deliver their offerings in the quickest time possible, and of good quality!

In order for these organizations to understand the quality / health of their products at a quick glance, typically a team of people scrambles to manually collect and collate the information needed to get a sense of the quality of the products they support.

So in the fast moving environment, where CI (Continuous Integration) and CD (Continuous Delivery) are now a necessity and not a luxury, how can teams take decisions if the product is ready to be deployed to the next environment or not?

Test Automation across all layers of the Test Pyramid is one of the first building blocks to ensure the team gets quick feedback into the health of the product-under-test.

The next set of questions are:

- How can you collate this information in a meaningful fashion to determine - yes, my code is ready to be promoted from one environment to the next?

- How can you know if the product is ready to go 'live'?

- What is the health of your product portfolio at any point in time?

- Can you identify patterns and quickly analyze the test results to help with root-cause analysis of issues that have occurred over a period of time, and so make better decisions to improve the quality of your product(s)?

The solution - TTA - Test Trend Analyzer

TTA is an open source product that becomes the source of information to give you real-time and visual insights into the health of the product portfolio using the Test Automation results, in form of Trends, Comparative Analysis, Failure Analysis and Functional Performance Benchmarking. This allows teams to take decisions on the product deployment to the next level using actual data points, instead of 'gut-feel' based decisions.

There are 2 sets of audience who will benefit from TTA:

1. Management - who want to know in real time what is the latest state of test execution trends across their product portfolios / projects. Also, they can use the data represented in the trend analysis views to make more informed decisions on which products / projects they need to focus more or less. Views like Test Pyramid View, Comparative Analysis help looking at results over a period of time, and using that as a data point to identify trends.

2. Team Members (developers / testers) - who want to do quick test failure analysis to get to the root cause analysis as quickly as possible. Some of the views - like Compare Runs, Failure Analysis, Test Execution Trend help the team on a day-to-day basis.

NOTE: TTA does not claim to give answers to the potential problems. It gives a visual representation of test execution results in different formats which allow team members / management to have more focussed conversations based on data points.
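To make views like Test Execution Trend and Compare Runs a little more concrete, here is a minimal sketch in Python. It is not TTA's implementation or data model: the `Run` shape (a mapping from test name to `"passed"` / `"failed"`), the function names and the sample results are all assumptions made up for illustration.

```python
from typing import Dict, List

# Hypothetical data shape: each run maps a test name to "passed" or "failed".
Run = Dict[str, str]


def pass_rate(run: Run) -> float:
    """Fraction of tests in a run that passed (0.0 for an empty run)."""
    if not run:
        return 0.0
    passed = sum(1 for status in run.values() if status == "passed")
    return passed / len(run)


def compare_runs(previous: Run, current: Run) -> Dict[str, List[str]]:
    """List the tests whose status changed between two runs."""
    newly_failing = sorted(
        name for name, status in current.items()
        if status == "failed" and previous.get(name) == "passed"
    )
    fixed = sorted(
        name for name, status in current.items()
        if status == "passed" and previous.get(name) == "failed"
    )
    return {"newly_failing": newly_failing, "fixed": fixed}


# Made-up results for two consecutive runs of the same suite.
runs = [
    {"login": "passed", "search": "passed", "checkout": "failed", "profile": "passed"},
    {"login": "passed", "search": "failed", "checkout": "passed", "profile": "passed"},
]

trend = [pass_rate(run) for run in runs]   # one data point per run
diff = compare_runs(runs[0], runs[1])      # what changed between the runs
```

In this made-up data the pass rate is the same for both runs, yet the comparison shows one test newly failing and another fixed. That is the kind of detail a single pass-rate number hides, and it gives the team something specific to discuss.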

Some pictures from the talk ... (Thanks to Shirish)

Labels: agile, agilepune2014, automation, collaboration, conference, feedback, innovation, opensource, pune, punedashboard, pyramid, ruby, test_pyramid, testing, testing_conference, thoughtworks, tta, visualization

Saturday, November 15, 2014

The decade of Selenium

Selenium has been around for over a decade now. ThoughtWorks has published an eBook on the occasion - titled - "Perspectives on Agile Software Testing". This eBook is available for free download.

I have written a chapter in the eBook - "Is Selenium Finely Aged Wine?"

An excerpt of this chapter is also published as a blog post on utest.com. You can find that here.

Monday, November 10, 2014

Perspectives on Agile Software Testing

Inspired by Selenium's 10th Birthday Celebration, a bunch of ThoughtWorkers have compiled an anthology of essays on testing approaches, tools and culture by testers for testers.

This anthology of essays is available as an ebook, titled - "Perspectives on Agile Software Testing" which is now available for download from here on ThoughtWorks site. A simple registration, and you will be able to download the ebook.

Here are the contents of the ebook:

Enjoy the read; I look forward to your feedback.

Monday, October 13, 2014

vodQA - Breaking Boundaries in Pune

[UPDATE] - The event was a great success - despite the rain gods trying to dissuade participants from joining in. For those who missed it, or who want to revisit the talks, the videos have been uploaded and are available here on YouTube.

[UPDATE] - Latest count - >350 interested attendees for listening to speakers delivering 6 talks, 3 lightning talks and attending 3 workshops. Not to forget the fun and networking with a highly charged audience at the ThoughtWorks, Pune office. Be there, or be left out! :)

I am very happy to write that the next vodQA is scheduled in ThoughtWorks, Pune on 15th November 2014. The theme this time around is "Breaking Boundaries".

You can register as an attendee here, and register as a speaker here. You can submit more than one topic for speaker registration - just email vodqa-pune@thoughtworks.com with details on the topics.

Thursday, July 31, 2014

Enabling Continuous Delivery (CD) in Enterprises with Testing

I spoke about "Enabling Continuous Delivery (CD) in Enterprises with Testing" in Unicom's World Conference on Next Generation Testing.

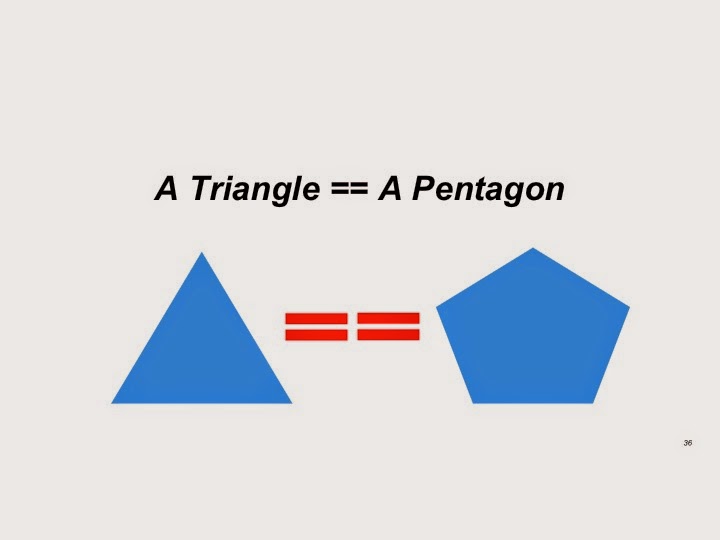

I started this talk by stating that I am going to prove that "A Triangle = A Pentagon".

A Triangle == A Pentagon??

I am happy to say that I was able to prove that "A Triangle IS A Pentagon" - in fact, I left reasonable doubt in the audience's mind that "A Triangle CAN BE an n-dimensional Polygon".

Confused? How is this related to Continuous Delivery (CD), or Testing? See the slides and the video from the talk to know more.

This topic is also available on ThoughtWorks Insights.

Below are some pictures from the conference.

Labels: agile, automation, automation_framework, bangalore, collaboration, conference, domain, feedback, innovation, meetup, pune, pyramid, tdd, test_pyramid, testing, testing_conference, thoughtworks, tta, visualization

Thursday, April 10, 2014

Sample test automation framework using cucumber-jvm

I wanted to learn and experiment with cucumber-jvm. My approach was to think of a real **complex scenario that needs to be automated and then build a cucumber-jvm based framework to achieve the following goals:

- Learn how cucumber-jvm works

- Create a bare-bone framework with all basic requirements that can be reused

So, without further ado, I introduce to you the cucumber-jvm-sample Test Automation Framework, hosted on github.

The following functionality is implemented in this framework:

- Tests specified using cucumber-jvm

- Build tool: Gradle

- Programming language: Groovy (for Gradle) and Java

- Test Data Management: Samples that use data specified in feature files, AND samples that use data from separate json files

- Browser automation: Using WebDriver for browser interaction

- Web Service automation: Using cxf library to generate client code from web service WSDL files, and invoke methods on the same

- Take screenshots on demand and save on disk

- Integrated cucumber-reports to get 'pretty' and 'meaningful' reports from test execution

- Using apache logger for storing test logs in files (and also report to console)

- Using aspectJ to do byte code injection to automatically log test trace to file. Also creating a separate benchmarks file to track time taken by each method. This information can be mapped separately in other tools like Excel to identify patterns of test execution.

Feel free to fork and use this framework on your projects. If there are any other features you think are important to have in a Test Automation Framework, let me know. Even better would be to submit pull requests with those changes, which I will take a look at and accept if it makes sense.

** Pun intended :) The complex test I am talking about is a simple search using google search.

Friday, March 28, 2014

WAAT Java v1.5.1 released today

After a long time, and with lot of push from collaborators and users of WAAT, I have finally updated WAAT (Java) and made a new release today.

You can get this new version - v1.5.1 directly from the project's dist directory.

Once I get some feedback, I will also update WAAT-ruby with these changes.

Here is the list of changes in WAAT_v1.5.1:

Changes in v1.5.1

- Engine.isExpectedTagPresentInActualTagList in the Engine class is made public

- Updated Engine to work without creating the testData.xml file, by directly sending expectedSectionList for tags. Added a new method Engine.verifyWebAnalyticsData(String actionName, ArrayList expectedSectionList, String[] urlPatterns, int minimumNumberOfPackets)

- Added an empty constructor for Section.java to prevent marshalling error

- Support Fragmented Packets

- Updated Engine to support Pattern comparison, instead of String contains

Thanks.

Sunday, October 6, 2013

Offshore Testing on Agile Projects

Anand Bagmar

Reality of organizations

Organizations are now spread across the world. With this spread, having distributed teams is a reality. Reasons could be a combination of various factors, including:

- Globalization

- Cost

- 24x7 availability

- Team size

- Mergers and Acquisitions

- Talent

The Agile Software methodology talks about various principles to approach Software Development. There are various practices that can be applied to achieve these principles.

The choice of practices is very significant and important in ensuring the success of the project. Some of the parameters to consider, in no particular order, are:

- Skillset on the team

- Capability on the team

- Delivery objectives

- Distributed teams

- Working with partners / vendors?

- Organization Security / policy constraints

- Tools for collaboration

- Time overlap between teams

- Mindset of team members

- Communication

- Test Automation

- Project Collaboration Tools

- Testing Tools

- Continuous Integration

** The above list is from a Software Testing perspective.

This post is about what practices we implemented as a team for an offshore testing project.

Case Study - A quick introduction

An enterprise had a B2B product providing an online version of a physically conducted auction for selling used vehicles, in real time and at high speed. Typical participation in this auction is by an auctioneer, multiple sellers, and potentially hundreds of buyers. Each sale can have up to 500 vehicles. Each vehicle gets sold / skipped in under 30 seconds - with multiple buyers potentially bidding on it at the same time. Key business rules: only 1 bid per buyer, no consecutive bids by the same buyer.
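As an illustration of the "no consecutive bids by the same buyer" rule, here is a small Python sketch. It is not the product's code: the class and method names are hypothetical, and the "only 1 bid per buyer" rule is deliberately left out because its exact meaning is not spelled out here.

```python
from typing import List, Optional, Tuple


class BidRejected(Exception):
    """Raised when a bid breaks the consecutive-bid rule."""


class VehicleBidding:
    """Hypothetical model of the bidding on a single vehicle."""

    def __init__(self) -> None:
        self.last_bidder: Optional[str] = None
        self.history: List[Tuple[str, int]] = []

    def place_bid(self, buyer: str, amount: int) -> None:
        # The same buyer may not place two bids in a row.
        if buyer == self.last_bidder:
            raise BidRejected(f"{buyer} cannot place consecutive bids")
        self.history.append((buyer, amount))
        self.last_bidder = buyer


bidding = VehicleBidding()
bidding.place_bid("buyer_a", 1000)
bidding.place_bid("buyer_b", 1100)

try:
    bidding.place_bid("buyer_b", 1200)   # second bid in a row by buyer_b
    consecutive_bid_rejected = False
except BidRejected:
    consecutive_bid_rejected = True

bidding.place_bid("buyer_a", 1200)       # allowed again: someone else bid in between
```

Even a rule this small needs at least two participants acting in a specific order to exercise it, which is why the tests had to simulate several buyers at once.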

Analysis and Development was happening across 3 locations – 2 teams in the US, and 1 team in Brazil. Only Testing was happening from Pune, India.

George Bernard Shaw said:

“Success does not consist in never making mistakes but in never making the same one a second time.”

We took that to heart, very sincerely. We applied all our learning and experience in picking the practices that would make us succeed. We consciously sought to be creative and innovative, and applied out-of-the-box thinking to how we approached testing (in terms of strategy, process, tools, techniques) for this unique, interesting and extremely challenging application, ensuring we did not go down the same path again.

Challenges

We had to overcome many challenges for this project.

- Challenge in creating a common DSL that will be understood by ALL parties - i.e. Clients / Business / BAs / PMs / Devs / QAs

- All examples / forums talk using trivial problems - whereas we had lot of data and complex business scenarios to take care of.

- Cucumber / capybara / WebDriver / ruby do not allow an easy way to do concurrency / parallel testing

- We needed to simulate in our manual + automation tests for "n" participants at a time, interacting with the sale / auction

- A typical sale / auction can contain 60-500 buyers, 1-x sellers, 1 auctioneer. The sale / auction can contain anywhere from 50-1000 vehicles to sell. There can be multiple sales going on in parallel. So how do we test these scenarios effectively?

- Data creation / usage is a huge problem (e.g. the production subset snapshot is > 10GB (compressed) in size, and a refresh takes a long time too)

- Getting a local environment in Pune to continue working effectively - all pairing stations / environment machines use RHEL Server 6.0 and are auto-configured using puppet. These machines are registered to the Client account on the RedHat Satellite Server.

- Communication challenge - We are working from 10K miles away - with a time difference of 9.5 / 10.5 hours (depending on DST) - this means almost 0 overlap with the distributed team. To add to that complexity, our BA was in another city in the US - so another time difference to take care of.

- End-to-end Performance / Load testing is not even a part of this scope - but something we are very wary of in terms of what can go wrong at that scale.

- We need to be agile - i.e. testing stories and functionality in the same iteration.

All the above-mentioned problems meant we had to come up with our own unique way of tackling the testing.
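To make the "n participants at a time" challenge concrete, here is a minimal sketch in plain Ruby threads. It is not our framework: `FakeAuction`, `place_bid` and `simulate_buyers` are hypothetical names, and the auction is an in-memory stand-in for the real application. The point it illustrates is that each buyer bids from a fixed list, so the end state can be asserted no matter how the threads interleave.

```ruby
# Hypothetical in-memory stand-in for the system under test.
# The real application and framework are not shown in this post.
class FakeAuction
  attr_reader :highest_bid, :bid_count

  def initialize
    @lock = Mutex.new
    @highest_bid = 0
    @bid_count = 0
  end

  # Record a bid; only a strictly higher amount becomes the new highest bid.
  def place_bid(buyer_id, amount)
    @lock.synchronize do
      @bid_count += 1
      @highest_bid = amount if amount > @highest_bid
    end
  end
end

# Start one thread per buyer. Each buyer places a fixed, precomputed series
# of bids, so the final state does not depend on thread scheduling.
def simulate_buyers(auction, buyer_count, bids_per_buyer)
  threads = (1..buyer_count).map do |buyer_id|
    Thread.new do
      (1..bids_per_buyer).each do |round|
        auction.place_bid(buyer_id, buyer_id * 10 + round)
      end
    end
  end
  threads.each(&:join) # wait for every buyer to finish
  auction
end

auction = simulate_buyers(FakeAuction.new, 100, 5)
puts "bids: #{auction.bid_count}, highest: #{auction.highest_bid}"
# prints "bids: 500, highest: 1005"
```

In a browser-driven suite each thread would drive its own session instead of calling a method on a shared object, but the idea of asserting on a known end state stays the same.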

Our principles - our North Star

We stuck to a few guiding principles as our North Star:

- Keep it simple

- We know the goal, so evolve the framework - don't start building everything from step 1

- Keep sharing the approach / strategy / issues faced on a regular basis with all concerned parties and make this a TEAM challenge instead of a Test team problem!

- Don't try to automate everything

- Keep test code clean

The End Result

At the end of the journey, here are some interesting events from the off-shore testing project:

- Tests were specified in the form of user journeys following the Behavior Driven Testing (BDT) philosophy - specified in Cucumber.

- Created a custom test framework (Cucumber, Capybara, WebDriver) that tests a real-time auction - in a very deterministic fashion.

- We had 65-70 tests in the form of user journeys that cover the full automated regression for the product.

- Our regression completed in less than 30 minutes.

- We had no manual tests to be executed as part of regression.

- All tests (=user journeys) are documented directly in Cucumber scenarios and are automated

- Anything that is not part of the user journeys is pushed down to the dev team to automate (or we try to write automation at that lower level)

- Created a ‘special’ Long running test suite that simulates a real sale with 400 vehicles, >100 buyers, 2 sellers and an auctioneer.

- Created special concurrent (high speed parallel) tests that ensure the system behaves correctly even at the highest possible load.

- Since there was no separate performance and load test strategy, we created special utilities in the automation framework to benchmark “key” actions.

- No separate documentation or test cases were ever written / maintained - and we never missed them either.

- A separate special sanity test that runs in production after deployment is done, to ensure all the integration points are set up properly.

- Changed our work timings (for most team members) to run from 12pm - 9pm IST, to get some more overlap and remote pairing time with the onsite team.

- Set up an ice-cream meter - for those who come late to standup.
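As an illustration of what a utility to benchmark “key” actions could look like, here is a small sketch built on Ruby's standard `Benchmark` module. `ActionTimer`, `measure` and `slower_than` are hypothetical names chosen for this example, not the utilities from our framework, and the `sleep` call merely stands in for a slow action.

```ruby
require 'benchmark'

# Hypothetical helper: times named "key" actions and keeps every duration.
class ActionTimer
  attr_reader :timings

  def initialize
    # Each action name maps to a list of elapsed times in seconds.
    @timings = Hash.new { |hash, name| hash[name] = [] }
  end

  # Runs the block, records how long it took, and returns the block's result
  # so the helper can wrap an existing step without changing its behavior.
  def measure(name)
    result = nil
    @timings[name] << Benchmark.realtime { result = yield }
    result
  end

  # Names of the actions whose slowest run exceeded the threshold (seconds).
  def slower_than(threshold)
    @timings.select { |_name, runs| runs.max > threshold }.keys
  end
end

timer = ActionTimer.new
value = timer.measure(:place_bid) { :accepted }   # fast stand-in action
timer.measure(:load_sale) { sleep 0.05 }          # slow stand-in action
puts timer.slower_than(0.03).inspect
```

Wrapping a step this way keeps the timing concern out of the scenario text: the user journey reads the same, while the collected timings can be compared against a threshold at the end of the run.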

Innovations and Customizations

Necessity breeds innovation! This was so true in this project.

Below is a table listing all the different areas and specifics of the customization we did in our framework.

Dartboard

Created a custom board “Dartboard” to quickly visualize the testing status in the Iteration. See this post for more details: “Dartboard - Are you on track?”

TaaS

To automate the last mile of Integration Testing between different applications, we created an open-source product - TaaS. This provides a platform / OS / Tool / Technology / Language agnostic way of Automating the Integrated Tests between applications.

Base premise for TaaS:

Enterprise-sized organizations have multiple products under their belt. The technology stack used for each of the products is usually different - for various reasons.

Most such organizations like to have a common Test Automation solution across these products in an effort to standardize the test automation framework.

However, this is not a good idea! If products in the same organization can be built using different / varied technology stacks, then why should you impose this restriction on the Test Automation environment?

Each product should be tested using the tools and technologies that are “right” for it.

“TaaS” is a product that allows you to achieve the “correct” way of doing Test Automation.

WAAT - Web Analytics Automation Testing Framework

I had created the WAAT framework for Java and Ruby in 2010/2011. However, this framework had a limitation - it did not work with products that are configured to work only in https mode.

For one of the applications, we needed to test WebTrends reporting. Since this application worked only in https mode, I created a new plugin for WAAT - JS Sniffer - that can work with https-only applications. See my blog for more details about WAAT.
